
The Decision That Shapes Your AI ROI

A CTO I worked with in Johannesburg thought they had cracked it.

They rolled out an AI assistant across internal teams. Early demos looked sharp. Fast answers, decent accuracy, and leadership was impressed. Then reality stepped in. Within a month, the model started surfacing outdated compliance information. Support teams stopped trusting it. Legal flagged risks. Suddenly, the same system that looked like a win had become a liability.

This isn’t unusual.

Across South Africa, whether it’s enterprise AI adoption in Johannesburg, scaling startups in Cape Town, or operations-heavy businesses in Durban, the same pattern shows up. Companies invest in AI. They see promise. Then they hit a wall.

Industry reports consistently suggest that a significant share of AI initiatives never move beyond pilot stages, often due to poor architectural decisions early on.

And most of the time, the issue isn’t the model itself. It’s the approach behind it.

That’s where the conversation around RAG vs Fine-Tuning becomes more than technical jargon. It becomes a business decision. One that directly impacts cost, reliability, and how much your teams actually trust the system.

Globally, I’ve seen enterprises over-invest in the wrong direction. Either they pour money into fine-tuning too early or build RAG systems without thinking through data quality. Both paths lead to friction.

Most teams don’t fail because AI is hard. They fail because they choose the wrong foundation.

So let’s unpack this properly. No theory-heavy explanations. Just what actually works in real deployments.

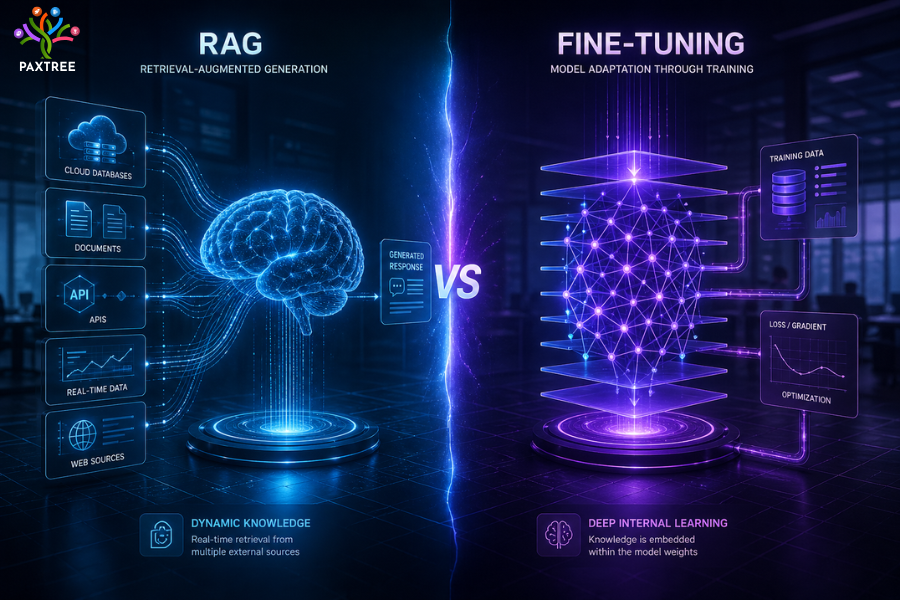

RAG vs Fine-Tuning: Quick Comparison

If you’re looking for a fast answer before diving deeper, here are the key differences and comparison points:

- RAG (Retrieval-Augmented Generation) retrieves relevant content from your own sources at query time, while fine-tuning further trains the model itself on a fixed dataset

- RAG is better for dynamic, frequently changing information; fine-tuning works best for consistent tone and structured outputs

- Cost-wise, RAG is lighter to start; fine-tuning requires higher upfront and ongoing investment

- Maintenance favors RAG, since updates happen at the data level, not the model level

- If you’re asking which is better, RAG or fine-tuning, the answer depends on your use case, not the technology itself

RAG = dynamic knowledge.

Fine-tuning = controlled behavior.

This quick view helps, but the real decision comes down to how your business operates day to day.

What is RAG (Retrieval-Augmented Generation)?

At a practical level, Retrieval-Augmented Generation is about grounding your AI in real, current data.

Instead of expecting the model to “know everything,” you let it fetch information from your own sources before answering.

Think of it less like training intelligence and more like enabling access.

In most enterprise projects I’ve seen, RAG acts as the bridge between static AI models and dynamic business knowledge.
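To make the retrieve-then-answer idea concrete, here is a minimal Python sketch. It is illustrative only: the three sample documents are invented, simple word-overlap scoring stands in for the embedding model and vector database a production system would use, and it stops at building the grounded prompt rather than calling any particular model API.

```python
import math
import re
from collections import Counter

# Hypothetical in-memory knowledge base; in practice these would be your own documents.
DOCUMENTS = {
    "returns-policy": "Customers may return unused items within 30 days with a receipt.",
    "pricing-rules": "Store managers may apply a maximum discount of 15 percent without approval.",
    "logistics": "Deliveries to Cape Town stores arrive on Tuesdays and Fridays.",
}


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors (stand-in for embeddings)."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, k: int = 2) -> list[tuple[str, float]]:
    """Score every document against the question and return the top k (id, score) pairs."""
    q = Counter(tokenize(question))
    scored = [(doc_id, cosine(q, Counter(tokenize(text)))) for doc_id, text in DOCUMENTS.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


def build_prompt(question: str, k: int = 2) -> str:
    """Ground the model by placing the retrieved passages ahead of the question."""
    context = "\n".join(f"[{doc_id}] {DOCUMENTS[doc_id]}" for doc_id, _ in retrieve(question, k))
    return (
        "Answer using only the context below. If the answer is not there, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


if __name__ == "__main__":
    print(build_prompt("What discount can a store manager give?"))
```

Calling `build_prompt("What discount can a store manager give?")` puts the pricing-rules passage at the top of the context. Swapping the scoring function for real embeddings changes retrieval quality, not the overall shape of the loop.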

Real business example

A retail group in Cape Town implemented RAG for internal operations. Store managers could ask questions about policies, pricing rules, or logistics workflows. The system didn’t rely on memory. It pulled directly from updated documents.

When policies changed, there was no retraining cycle. Just updated data.

That alone saved weeks of operational lag.
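The "no retraining cycle" point can be shown in a few lines. The sketch below assumes a hypothetical `DocumentIndex` class with a placeholder `embed` method standing in for a real embedding model. It fingerprints each document with SHA-256 so that only new or changed content is re-processed; the model itself is never touched.

```python
import hashlib


class DocumentIndex:
    """Toy index that re-embeds a document only when its content has changed.

    `embed` is a hypothetical placeholder for a call to a real embedding model.
    """

    def __init__(self) -> None:
        self.hashes: dict[str, str] = {}          # doc_id -> SHA-256 of current text
        self.vectors: dict[str, list[float]] = {}  # doc_id -> stored "embedding"
        self.embed_calls = 0

    def embed(self, text: str) -> list[float]:
        # Placeholder vector; a real system would call an embedding model here.
        self.embed_calls += 1
        return [float(len(text)), float(text.count(" "))]

    def upsert(self, doc_id: str, text: str) -> bool:
        """Add or update a document; return True if it had to be re-embedded."""
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
        if self.hashes.get(doc_id) == digest:
            return False  # content unchanged, nothing to do
        self.hashes[doc_id] = digest
        self.vectors[doc_id] = self.embed(text)
        return True

    def delete(self, doc_id: str) -> None:
        """Remove a retired document so it can no longer be retrieved."""
        self.hashes.pop(doc_id, None)
        self.vectors.pop(doc_id, None)
```

Upserting the same policy text twice triggers one embedding call; editing the text triggers a second. That is the entire update cycle.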

Benefits of RAG

- Keeps responses aligned with current data

- Eliminates constant retraining cycles

- Works well for document-heavy environments

- Faster to deploy compared to fine-tuned systems

- Easier to scale across teams and departments

Limitations

Here’s the catch.

RAG is only as good as the data behind it.

- Poorly structured data leads to weak outputs

- Retrieval systems need proper tuning

- Added latency, since every answer waits on a retrieval step

- Doesn’t deeply change the model’s tone or behavior

On paper, RAG sounds simple. In reality, most teams underestimate the data preparation required, and that’s usually where things start slipping. What gets overlooked is that retrieval quality caps output quality: the model can only be as accurate as the passages it is handed.
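Data preparation usually starts with chunking: splitting long documents into passages small enough to retrieve precisely. A minimal sketch follows. It counts words as a rough proxy for model tokens, and the default sizes of 200 words with a 40-word overlap are assumptions to tune against your own content, not recommendations.

```python
def chunk_words(text: str, chunk_size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into word-based chunks; consecutive chunks share `overlap` words.

    The overlap keeps a sentence that straddles a boundary retrievable from either side.
    """
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this chunk already reaches the end of the text
    return chunks
```

In practice, teams often also split on headings or paragraphs first, so a chunk rarely mixes two unrelated policies.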

What is Fine-Tuning?

Fine-tuning takes a different route altogether.

Instead of connecting the model to external data, you reshape the model itself. You train it using your own datasets so it behaves in a very specific way.

This is less about access to knowledge and more about shaping behavior.

Practical use case

A SaaS company in Durban wanted consistent onboarding communication across thousands of users. Not just correct answers, but the same tone, structure, and messaging every time.

They fine-tuned their model using past interactions and internal content.

The result? Outputs that felt aligned with their brand without needing external lookups.
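Preparing that kind of dataset mostly means reshaping past interactions into training examples. The sketch below emits chat-style JSONL (one JSON object per line, each holding a `messages` list), a layout several hosted fine-tuning services accept; check your provider’s documentation for its exact schema. The system prompt and sample content are hypothetical.

```python
import json

# Hypothetical brand-voice instruction; replace with your own.
SYSTEM_PROMPT = "You are the onboarding assistant. Be concise, friendly, and use numbered steps."


def to_training_record(user_message: str, approved_reply: str) -> dict:
    """Turn one past interaction into a chat-style training example."""
    return {
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": user_message},
            {"role": "assistant", "content": approved_reply},
        ]
    }


def to_jsonl(pairs: list[tuple[str, str]]) -> str:
    """Serialize (user message, approved reply) pairs as JSONL, one record per line."""
    return "".join(
        json.dumps(to_training_record(user, reply), ensure_ascii=False) + "\n"
        for user, reply in pairs
    )
```

Using only replies your team has reviewed and approved matters more than volume: the model learns whatever tone the examples carry, good or bad.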

Advantages of Fine-Tuned AI Models

- Strong control over tone and output style

- Faster responses since there’s no retrieval layer

- Ideal for repetitive, structured tasks

- Better alignment with brand or domain language

Drawbacks

This is where things get expensive.

- Training requires high-quality datasets

- Retraining becomes necessary as things evolve

- Costs add up quickly, especially at scale

- Not suited for frequently changing information
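What "high-quality" means in practice starts with basic hygiene. This hypothetical `prepare_dataset` helper drops exact duplicates (ignoring case and surrounding whitespace) and replies shorter than a minimum word count, then holds back a validation split so you can check whether the tuned model generalizes rather than memorizes. The thresholds are illustrative assumptions.

```python
import random


def prepare_dataset(
    pairs: list[tuple[str, str]],
    min_reply_words: int = 3,
    holdout_fraction: float = 0.1,
    seed: int = 42,
) -> tuple[list[tuple[str, str]], list[tuple[str, str]]]:
    """Deduplicate, drop short replies, and split into (train, validation) lists."""
    seen = set()
    clean = []
    for prompt, reply in pairs:
        key = (prompt.strip().lower(), reply.strip().lower())
        if key in seen or len(reply.split()) < min_reply_words:
            continue  # skip duplicates and replies too short to teach anything
        seen.add(key)
        clean.append((prompt.strip(), reply.strip()))
    random.Random(seed).shuffle(clean)  # fixed seed keeps the split reproducible
    n_val = max(1, int(len(clean) * holdout_fraction)) if len(clean) > 1 else 0
    return clean[n_val:], clean[:n_val]
```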

What usually happens in real deployments is teams underestimate the maintenance overhead. Fine-tuning isn’t a one-time effort. It’s an ongoing commitment. This is where budgets usually get wasted if there’s no clear long-term plan.

Customization feels powerful. Until you have to maintain it at scale.

RAG vs Fine-Tuning: Key Differences

Let’s get into the comparison that actually matters from a business perspective.

If you’re evaluating the difference between RAG and fine-tuning, these are the areas that influence real decisions.

Performance

RAG tends to win when accuracy depends on current information. Fine-tuned models perform better when consistency and tone are critical.

Different strengths. Different trade-offs.

Cost

RAG typically has a lower entry cost. You invest in infrastructure and data pipelines.

Fine-tuning demands upfront investment in training and continuous updates. This is where budgets can quietly spiral.

Scalability

RAG scales by expanding data sources. Add more documents, keep the index well maintained, and the system grows with them.

Fine-tuning doesn’t scale that easily. More data usually means retraining cycles.

Maintenance

RAG is relatively straightforward. Update your data and the system reflects changes.

Fine-tuning needs ongoing attention. Models don’t stay relevant without retraining.

Data Dependency

RAG depends on accessible, structured data systems.

Fine-tuning depends on curated training datasets, which are harder to build than most teams expect.

If you’re evaluating RAG vs fine-tuning for enterprises, this is where clarity starts to emerge. It’s less about capability and more about operational fit.
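As a first pass, the criteria above can be condensed into a checklist. The sketch below is a rough simplification of this comparison with hypothetical field names; treat its output as a conversation starter, not a verdict.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    """Hypothetical yes/no questions distilled from the comparison above."""
    knowledge_changes_often: bool  # policies, pricing, regulations
    needs_consistent_voice: bool   # tone, brand language, strict formats
    needs_source_grounding: bool   # answers must trace back to documents
    stable_high_volume: bool       # stable task at a scale that justifies retraining


def recommend(u: UseCase) -> str:
    """Rough first-pass recommendation based on the trade-offs in this section."""
    wants_rag = u.knowledge_changes_often or u.needs_source_grounding
    wants_ft = u.needs_consistent_voice and u.stable_high_volume
    if wants_rag and wants_ft:
        return "hybrid: RAG for knowledge, fine-tuning for behavior"
    if wants_ft:
        return "fine-tuning"
    if wants_rag:
        return "RAG"
    return "start with RAG and measure the gaps"
```

Note that a need for a consistent voice alone does not trigger fine-tuning here; the use case also has to be stable enough to justify retraining, which mirrors the cost argument later in this piece.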

When Should Businesses Use RAG?

If your business runs on information that changes regularly, RAG usually makes more sense.

In most enterprise AI solutions I’ve worked on, RAG becomes the default starting point.

Practical scenarios

- Internal knowledge assistants

- Customer support systems tied to policies

- Compliance-driven environments

- Large document repositories

Industry examples

Banking in Johannesburg

Regulations shift. Policies evolve. RAG helps keep responses aligned with the latest rules, as long as the underlying documents are kept current.

Healthcare in Cape Town

Clinical guidelines change. RAG helps teams access updated information without delays.

Retail operations in Durban

Inventory, pricing, and workflows change constantly. Static models struggle here. RAG doesn’t.

Why it works

Because it separates knowledge from the model itself.

You’re not constantly retraining. You’re updating information. That’s a big difference in real-world operations.

If you’re asking when to use RAG vs fine-tuning, this is typically the side where RAG dominates.

When Should You Choose Fine-Tuning?

Fine-tuning comes into play when behavior matters more than dynamic knowledge.

And this is where many teams get it wrong.

They choose fine-tuning thinking it will solve accuracy problems. It doesn’t reliably teach the model new or changing facts. It solves consistency problems.

Best-fit scenarios

- Brand-specific communication

- Conversational AI with emotional tone requirements

- Domain-specific language or workflows

- Structured output generation

Where RAG falls short

RAG doesn’t shape personality well.

If your AI needs to sound a certain way, respond consistently, or follow strict communication patterns, fine-tuning becomes necessary.
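One way to test whether you actually need fine-tuning for structure is to measure how often current outputs comply. The sketch assumes outputs are meant to be JSON objects, and the contract fields in `REQUIRED_FIELDS` are hypothetical.

```python
import json

# Hypothetical schema for a contract-summary output.
REQUIRED_FIELDS = {"parties", "effective_date", "governing_law"}


def is_compliant(output: str) -> bool:
    """True if the output parses as a JSON object containing every required field."""
    try:
        data = json.loads(output)
    except json.JSONDecodeError:
        return False
    return isinstance(data, dict) and REQUIRED_FIELDS.issubset(data)


def compliance_rate(outputs: list[str]) -> float:
    """Share of outputs that follow the required structure (0.0 for an empty list)."""
    return sum(is_compliant(o) for o in outputs) / len(outputs) if outputs else 0.0
```

If the rate stays low with RAG and careful instructions alone, that is concrete evidence of the consistency gap fine-tuning is meant to close.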

Example

A legal tech platform needed contract drafts that followed a very specific tone and structure. RAG alone couldn’t deliver that consistency. Fine-tuning closed the gap.

Cost Comparison (Business Perspective)

This is usually where leadership teams pause and reassess.

Implementation Cost

RAG requires infrastructure setup. Vector databases, pipelines, integration work. Moderate investment.

Fine-tuning? Higher upfront cost. Data preparation, training cycles, testing. It adds up quickly.

Long-Term Maintenance

RAG is lighter. Update your data sources and the system evolves.

Fine-tuning demands ongoing retraining. Especially if your business changes frequently.
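A simple cumulative-cost model makes this trade-off visible over time. The function below is a hypothetical sketch, and any numbers you feed it should be your own infrastructure, hosting, and training quotes; none are implied here.

```python
def cumulative_cost(
    months: int,
    upfront: float,
    monthly_run: float,
    retrain_cost: float = 0.0,
    retrain_every_months: int = 0,
) -> float:
    """Total spend after `months`: setup + running costs + periodic retraining.

    Set retrain_every_months to 0 for approaches with no retraining (e.g. RAG,
    where updates happen at the data level).
    """
    retrains = months // retrain_every_months if retrain_every_months > 0 else 0
    return upfront + monthly_run * months + retrain_cost * retrains
```

Running it for both options at 12, 24, and 36 months shows where, if anywhere, the curves cross for your own numbers.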

ROI Reality

In most cases, RAG delivers faster returns.

Fine-tuning starts paying off only when:

- Use cases are stable

- Output precision is critical

- Scale justifies ongoing investment

The real cost of AI isn’t implementation. It’s choosing the wrong approach early.

This is why modern AI model optimization strategies rarely rely on one approach alone.

Many organizations working with AI development services or enterprise AI consulting partners already realize this decision shapes everything that follows, from scalability to long-term ROI.

Real-World Use Cases

Let’s ground this in actual applications.

Banking

Financial institutions use RAG to reduce incorrect responses in customer support. Accuracy improves. Risk drops.

Retail

Retailers often combine both approaches. RAG for operations. Fine-tuned models for marketing and communication.

Healthcare

RAG ensures access to updated clinical data. Fine-tuning helps improve patient interaction quality.

SaaS

Most SaaS platforms eventually move toward a hybrid setup.

RAG handles knowledge retrieval. Fine-tuning refines user experience.

This hybrid approach is becoming common in enterprise AI adoption in South Africa and globally, especially among companies investing in scalable enterprise AI solutions.
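In code, the hybrid split is mostly a matter of wiring. In this sketch `retrieve` and `generate` are injected callables, and `ft-brand-voice` is a hypothetical identifier for a fine-tuned model; no specific vendor API is implied.

```python
from typing import Callable

# Hypothetical identifier for a fine-tuned model.
FINE_TUNED_MODEL = "ft-brand-voice"


def answer(
    question: str,
    retrieve: Callable[[str], list[str]],
    generate: Callable[[str, str], str],
) -> str:
    """Hybrid flow: retrieval supplies the facts, the fine-tuned model supplies the voice."""
    passages = retrieve(question)
    if not passages:
        # No grounding found: return a safe reply instead of letting the model guess.
        return "I couldn't find that in our documentation. Please contact support."
    context = "\n".join(passages)
    prompt = f"Context:\n{context}\n\nQuestion: {question}"
    return generate(FINE_TUNED_MODEL, prompt)
```

Returning a fixed fallback when retrieval finds nothing is a deliberate design choice: it keeps the fine-tuned model from filling gaps with confident guesses in a polished brand voice.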

Common Mistakes Businesses Make

This is where most of the damage happens.

Choosing Fine-Tuning Too Early

Teams assume customization is the priority. It usually isn’t. Accuracy and adaptability come first.

Ignoring Data Quality

RAG systems fail because of messy data. Not because of weak models.
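Messy data includes stale data, which is exactly what undermined the assistant in the opening story. A small check like this one, assuming each document carries a last-reviewed date in its metadata, can flag content for review or exclusion before it is indexed. The 180-day threshold is an arbitrary example.

```python
from datetime import date, timedelta


def find_stale(docs: dict[str, date], today: date, max_age_days: int = 180) -> list[str]:
    """Return sorted IDs of documents last reviewed more than `max_age_days` ago."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(doc_id for doc_id, reviewed in docs.items() if reviewed < cutoff)
```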

Overengineering

Some teams build complex architectures without clear use cases. It rarely ends well.

Lack of Focus

Trying to solve everything with one AI system leads to mediocre results across the board.

Underestimating Maintenance

Especially with fine-tuned systems. They don’t stay relevant on their own.

Expert Recommendation: What I Tell Clients

If you’re deciding between RAG vs Fine-Tuning, don’t overcomplicate it.

Start with RAG.

It’s practical. Faster to deploy. Easier to adjust. And in my experience with most enterprise environments, it gets you 70 to 80 percent of the value quickly.

Then evaluate.

Where does it fall short? Tone? Consistency? Structured outputs?

That’s where fine-tuning comes in.

A practical path

- Define clear business use cases

- Implement RAG for knowledge-driven workflows

- Measure gaps and performance

- Apply fine-tuning selectively

In most enterprise projects I’ve seen succeed, this layered approach works best.

Not because it’s perfect. But because it’s realistic.
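For the "measure gaps and performance" step, a useful first metric is retrieval hit rate: for a labeled set of real questions, how often does at least one relevant document appear in the top k results? The sketch assumes you have collected those labels yourself; question and document IDs are hypothetical.

```python
def hit_rate_at_k(results: dict[str, list[str]], expected: dict[str, set[str]], k: int = 3) -> float:
    """Fraction of labeled questions with at least one relevant doc in the top k results."""
    if not expected:
        return 0.0
    hits = sum(
        1 for question, relevant in expected.items()
        if relevant & set(results.get(question, [])[:k])
    )
    return hits / len(expected)
```

A low hit rate points at data and retrieval work; a high hit rate paired with poorly shaped answers points toward the tone and structure gaps where fine-tuning earns its place.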

Conclusion: Making the Right Call

There isn’t a universal answer in the RAG vs Fine-Tuning debate.

It depends on what your business actually needs.

If your environment is dynamic and information-heavy, RAG is the safer bet.

If precision, tone, and consistency matter more, fine-tuning becomes valuable.

And if you’re building for scale, combining both usually makes the most sense.

The companies getting real value from AI aren’t chasing trends. They’re making deliberate, informed decisions based on how their business operates.

Ready to Build the Right AI Strategy?

If you’re exploring AI for your organization and trying to figure out what actually fits, this is the stage where decisions start shaping long-term ROI.

The difference between a working AI system and a scalable one usually comes down to early architectural choices.

Wait too long or choose wrong, and you don’t just lose time. You burn budget, slow down teams, and create systems people stop trusting.

If you want clarity on what will actually perform in your environment, now is the right time to map it properly.

Talk to our AI experts today to design an AI implementation strategy tailored to your business, avoid expensive missteps, and move from experimentation to measurable results before those costs start compounding.